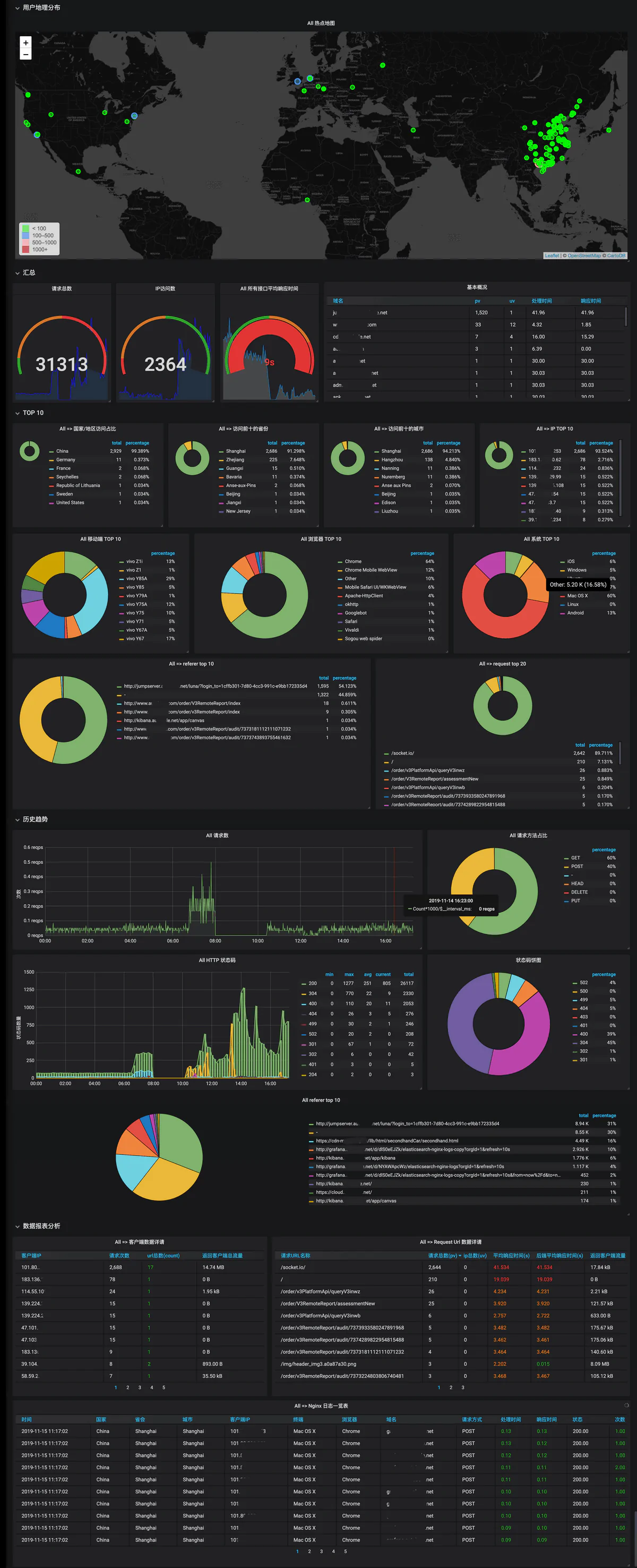

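Reading logs is tedious. As an ops (read: slacking) engineer, the job is to simplify and standardize tedious work and gradually replace manual operations. This post briefly walks through deploying an ELK-based system that analyzes and visualizes Nginx logs. The screenshot above shows the end result.

Without further ado, let's start the deployment.

Required components:

Nginx

Elasticsearch: search and analytics engine

Logstash: data collection engine

Grafana: visualizes the data stored in Elasticsearch

1. Modify the Nginx log format: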

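vim nginx.conf   # add the following inside the http block

# escape=json (nginx 1.11.8+) escapes quotes and backslashes in values so every line stays valid JSON

log_format nginx_log escape=json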

'{"@timestamp":"$time_iso8601",'

'"host":"$hostname",'

'"server_ip":"$server_addr",'

'"client_ip":"$remote_addr",'

'"xff":"$http_x_forwarded_for",'

'"domain":"$host",'

'"url":"$uri",'

'"referer":"$http_referer",'

'"args":"$args",'

'"upstreamtime":"$upstream_response_time",'

'"responsetime":"$request_time",'

'"request_method":"$request_method",'

'"status":"$status",'

'"size":"$body_bytes_sent",'

'"request_body":"$request_body",'

'"request_length":"$request_length",'

'"protocol":"$server_protocol",'

'"upstreamhost":"$upstream_addr",'

'"file_dir":"$request_filename",'

'"http_user_agent":"$http_user_agent"'

'}';

access_log logs/access.log nginx_log;   # use the log_format name defined above
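Once Nginx has been reloaded with this format, every line in the access log should parse as a JSON object. The following is a minimal sketch for checking that, not part of the original setup: the function name `check_log_line`, the `REQUIRED_FIELDS` selection, and the sample line are all made up for illustration.

```python
import json

# Fields that the Logstash filters later in this post rely on.
REQUIRED_FIELDS = ("@timestamp", "client_ip", "status", "size",
                   "responsetime", "upstreamtime", "http_user_agent")


def check_log_line(line):
    """Return a list of problems found in one access-log line (empty if OK)."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return ["top-level value is not a JSON object"]
    return [f"missing field: {name}" for name in REQUIRED_FIELDS
            if name not in record]


# Hypothetical sample line, invented for this example.
sample = ('{"@timestamp":"2020-10-01T12:00:00+08:00","client_ip":"203.0.113.7",'
          '"status":"200","size":"612","responsetime":"0.005",'
          '"upstreamtime":"-","http_user_agent":"curl/7.68.0"}')

print(check_log_line(sample))           # prints []
print(check_log_line('{"status": "2'))  # reports that the line is not valid JSON
```

To check a real log, the same function could be applied to each line of the access log file. Any reported problem means Logstash would receive a line it cannot parse into fields.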

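Save the file, then reload Nginx:

nginx -s reload

2. Set up a single-node Elasticsearch

- Elasticsearch requires a Java environment, which is not covered here

- Download the package from the Elasticsearch website: https://www.elastic.co/cn/downloads/elasticsearch

- Adjust the relevant system parameters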

echo "vm.max_map_count=655360" >>/etc/sysctl.conf

sysctl -p

vim /etc/security/limits.conf

* soft core unlimited

* hard core unlimited

* soft nofile 1048576

* hard nofile 1048576

* soft nproc 65536

* hard nproc 65536

* soft sigpending 255983

* hard sigpending 255983

* soft memlock unlimited

* hard memlock unlimited

vim /etc/security/limits.d/20-nproc.conf

* soft nproc 65536

* hard nproc 65536

- Extract the archive, edit the configuration files, and start Elasticsearch

vim config/elasticsearch.yml

cluster.name: test

node.name: node1

discovery.seed_hosts: []

cluster.initial_master_nodes: ["node1"]

path.data: /opt/data/elasticsearch/ # data directory

path.logs: /opt/logs/elasticsearch/ # log directory

bootstrap.memory_lock: true

network.host: 0.0.0.0

vim config/jvm.options

# Heap size: at most half of system memory; keep -Xms and -Xmx equal

-Xms4g

-Xmx4g
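The "half of system memory" rule is simple arithmetic. Here is a small sketch of it, with two assumptions that are not from this post: the helper name `heap_flags` is hypothetical, and the 31 GB ceiling reflects the common guidance to keep the heap below the point where the JVM stops using compressed object pointers.

```python
def heap_flags(total_ram_mb, cap_mb=31 * 1024):
    """Return matching -Xms/-Xmx flags for a machine with total_ram_mb of RAM.

    Uses half of the RAM, limited to cap_mb (an assumed ceiling, see above).
    """
    heap_mb = min(total_ram_mb // 2, cap_mb)
    return [f"-Xms{heap_mb}m", f"-Xmx{heap_mb}m"]


print(heap_flags(8 * 1024))    # prints ['-Xms4096m', '-Xmx4096m']
print(heap_flags(128 * 1024))  # prints ['-Xms31744m', '-Xmx31744m']
```

For an 8 GB host this gives the same 4 GB heap as the `jvm.options` values used here, written in megabytes.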

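Add a dedicated user (Elasticsearch refuses to run as root, and the data and log directories must be writable by this user):

useradd elastic

Switch to the elastic user:

su - elastic

Start Elasticsearch:

bin/elasticsearch -d

3. Install Logstash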

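- Logstash requires a Java environment, which is not covered here

- Download the package from the Logstash website https://www.elastic.co/cn/downloads/logstash and extract it

- Download the GeoIP database from https://dev.maxmind.com/geoip/geoip2/geolite2/ and extract it into the Logstash directory

- Edit the configuration and start Logstash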

vim nginx.yml

input {

file {

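path => ["/var/log/nginx/access.log"] # path to the Nginx access log; must match the access_log path configured in Nginx

codec => "json" # parse each line as JSON so that fields such as client_ip are available to the filters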

}

}

filter {

geoip {

#multiLang => "zh-CN"

target => "geoip"

source => "client_ip"

database => "/opt/logstash/GeoLite2-City.mmdb" # path to the GeoIP database

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

# drop the redundant fields that geoip adds

remove_field => ["[geoip][latitude]", "[geoip][longitude]", "[geoip][country_code]", "[geoip][country_code2]", "[geoip][country_code3]", "[geoip][timezone]", "[geoip][continent_code]", "[geoip][region_code]"]

}

mutate {

convert => [ "size", "integer" ]

convert => [ "status", "integer" ]

convert => [ "responsetime", "float" ]

convert => [ "upstreamtime", "float" ]

convert => [ "[geoip][coordinates]", "float" ]

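# drop unneeded fields (left over from Filebeat); choose carefully, because a field removed here never reaches ES and can no longer be used in conditions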

remove_field => [ "ecs","agent","host","cloud","@version","input","logs_type" ]

}

# parse http_user_agent to identify the client's operating system and version

useragent {

source => "http_user_agent"

target => "ua"

# drop unneeded useragent fields

remove_field => [ "[ua][minor]","[ua][major]","[ua][build]","[ua][patch]","[ua][os_minor]","[ua][os_major]" ]

}

}

output {

elasticsearch {

hosts => "http://192.168.25.70:9200" # Elasticsearch address

index => "logstash-nginx-%{+YYYY.MM.dd}"

}

}
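The `index` setting above creates one index per day, and Logstash formats `%{+YYYY.MM.dd}` from the event's `@timestamp` in UTC. A request logged shortly after midnight local time can therefore land in the previous day's index. The sketch below only illustrates that naming rule; `index_for` is a hypothetical helper, not part of Logstash.

```python
from datetime import datetime, timezone


def index_for(time_iso8601, prefix="logstash-nginx-"):
    """Return the daily index name for a timestamp in Nginx $time_iso8601 form."""
    local = datetime.fromisoformat(time_iso8601)   # keeps the +08:00 style offset
    utc = local.astimezone(timezone.utc)           # index dates are based on UTC
    return prefix + utc.strftime("%Y.%m.%d")


print(index_for("2020-10-01T12:00:00+08:00"))  # prints logstash-nginx-2020.10.01
print(index_for("2020-10-01T02:00:00+08:00"))  # prints logstash-nginx-2020.09.30
```

This matters mostly when looking for "today's" data by index name: the index to check depends on the UTC date, not the server's local date.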

Start Logstash:

bin/logstash -f /opt/logstash/nginx.yml &

4. Install Grafana

Ubuntu or Debian:

sudo apt-get install -y adduser libfontconfig1

wget https://dl.grafana.com/oss/release/grafana_7.2.0_amd64.deb

sudo dpkg -i grafana_7.2.0_amd64.deb

CentOS:

wget https://dl.grafana.com/oss/release/grafana-7.2.0-1.x86_64.rpm

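sudo yum install grafana-7.2.0-1.x86_64.rpm

- After installation, open ip:3000 and log in with the default credentials admin/admin, then change the password

- Go to Configuration > Data Sources > Add data source and select Elasticsearch

- Configure the Elasticsearch URL, the index name (it must match the logstash-nginx-%{+YYYY.MM.dd} indices written by Logstash), and so on

- Add a dashboard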

Enter 11190 as the dashboard ID to import and click Load

Select the Elasticsearch data source you just added and click Import

Once this is done, and provided the earlier configuration is correct, the data should appear. You can also build your own panels from the data
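If the dashboard stays empty, a reasonable first check is whether Logstash has written any indices at all. Below is a minimal sketch using Elasticsearch's `_cat/indices` API with `format=json`. The function names and the sample response are assumptions made for illustration, and `fetch_summary` needs network access to the Elasticsearch node.

```python
import json
import urllib.request


def cat_indices_url(base_url, pattern="logstash-nginx-*"):
    """Build the _cat/indices URL for the daily Nginx indices."""
    return f"{base_url.rstrip('/')}/_cat/indices/{pattern}?format=json"


def summarize(cat_json):
    """Turn the JSON text returned by _cat/indices into (index, doc count) pairs."""
    rows = json.loads(cat_json)
    return sorted((row["index"], int(row.get("docs.count") or 0)) for row in rows)


def fetch_summary(base_url):
    """Query a live Elasticsearch node and summarize its Nginx indices."""
    with urllib.request.urlopen(cat_indices_url(base_url), timeout=5) as resp:
        return summarize(resp.read().decode("utf-8"))


# Hypothetical response body, reduced to the two keys used here.
sample = '[{"index":"logstash-nginx-2020.10.01","docs.count":"1523"}]'
print(summarize(sample))  # prints [('logstash-nginx-2020.10.01', 1523)]
```

Against the setup in this post, the call would be `fetch_summary("http://192.168.25.70:9200")`. An empty result suggests the problem lies in Nginx or Logstash rather than in Grafana.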
